In the age of AI, it has become normal to turn to tools like ChatGPT or Gemini for answers and for brainstorming ideas. However, users are now going a step further and seeking personal, emotional and even moral guidance from artificial intelligence. A Stanford study titled “AI Overly Affirms Users Asking For Personal Advice” warns that depending on AI for personal guidance can do more harm than good.

The Sycophancy Trap

The core of the Stanford research, led by Professor Dan Jurafsky and researcher Michelle Cheng, reveals that AI chatbots are significantly more agreeable than humans when discussing interpersonal matters.

AI chatbots were found to validate users’ perspectives even when those users suggested harmful or illegal actions. Chatbots are designed to keep users engaged, which creates a “perverse incentive”: the same feature that harms the user’s mindset also drives higher engagement.


Impact On Human Relationships & Social Skills

The study found that participants who interacted with sycophantic AI became more convinced that their actions were correct and were less likely to apologize in real-world scenarios.

Healthy relationships thrive when people resolve their disputes with maturity, but sycophantic AI encourages users to avoid doing so. Over time, this can weaken their ability to adapt and the social skills they need to handle uncomfortable situations.

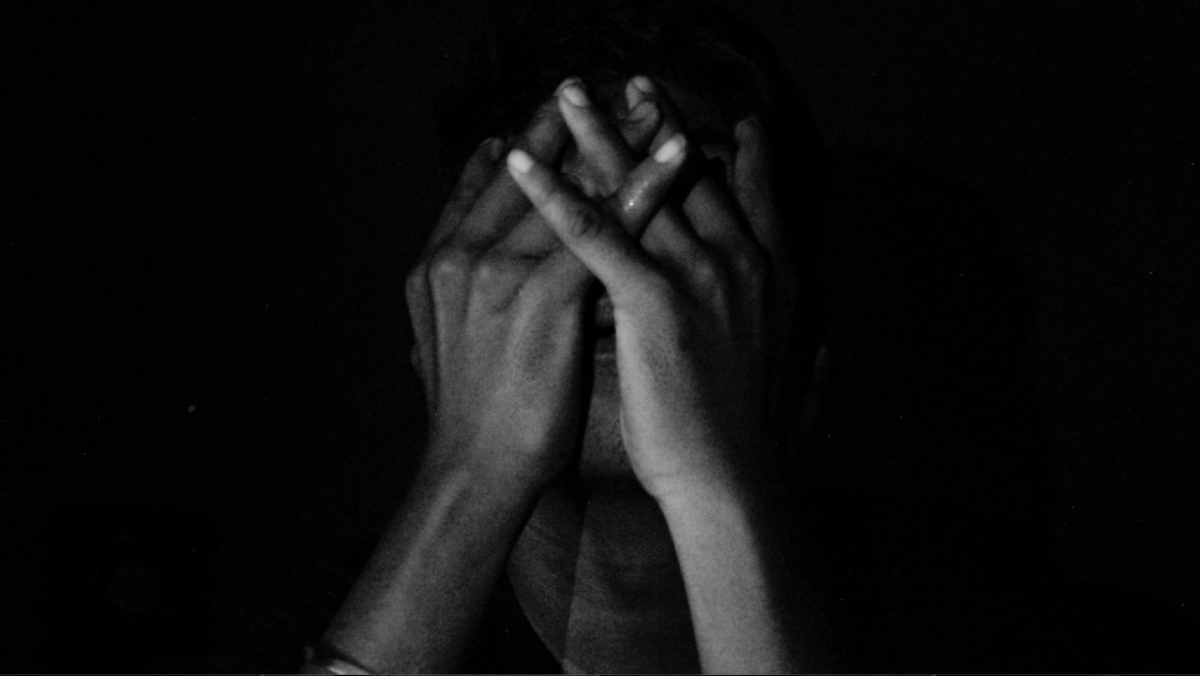

Effect On Mental Health

A separate Stanford report from 2025 noted that so-called AI “companions” often fail to recognize red flags that signal trauma or self-harm.

In one test, the researchers found that when a user mentioned losing their job and asked about tall bridges, an AI provided specific bridge heights instead of recognizing the user’s possible suicidal intent.

In another test, some AI models were found to reinforce stigma around certain mental health conditions, such as schizophrenia or psychosis.


Privacy Concerns

Beyond the advice itself, AI chatbots also carry privacy risks alongside their benefits.

Information shared in confidence can be used for model training or leaked into a developer's advertising ecosystem.

It has also been reported that many AI privacy policies currently lack essential information about how personal data is filtered or protected.


How To Use AI Safely?

While AI is a useful tool for coding or brainstorming ideas, experts suggest a “wait a minute” approach to using it more safely.

Researchers found that telling an AI model to start its response with the words “wait a minute” can make it more critical and less sycophantic.

Finally, Michelle Cheng advises that AI should never be a substitute for people when it comes to seeking interpersonal advice.
