Above: Dario Amodei, the CEO of Anthropic (left), and Peter Hegseth, United States Secretary of War (right). Photo by TechCrunch (CC BY 2.0) on Wikimedia Commons.

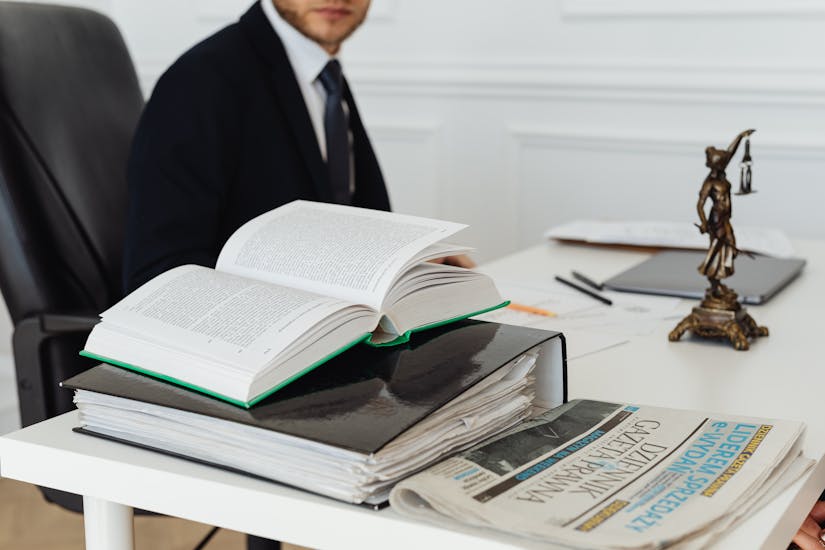

The artificial intelligence firm Anthropic has launched a historic legal battle against the U.S. government. The company filed two distinct lawsuits: one in the U.S. District Court for the Northern District of California and another in the D.C. Circuit Court of Appeals. Anthropic is asking the courts to block the Pentagon from enforcing a "supply-chain risk" designation against it. This dramatic escalation follows a month-long feud over how the military is permitted to use the company's Claude AI models.

But why is Anthropic suing the Pentagon? The core of this dispute lies in Anthropic's refusal to grant the Department of Defense (DoD) unrestricted access to its technology. During contract renegotiations over a $200 million deal, the Pentagon demanded that Anthropic remove its "hard limits" on deploying AI for fully autonomous lethal weapons.

Furthermore, the military sought the ability to use Claude for "all lawful purposes," which Anthropic interpreted as enabling mass domestic surveillance of American citizens. Anthropic executives argued that current AI models are not yet reliable enough for autonomous warfare without human oversight, and that such surveillance would violate fundamental human rights and the company's founding mission.

What does Pete Hegseth have to say about it?

Pete Hegseth, Secretary of Defense, issued a harsh ultimatum to Anthropic in late February 2026: drop the AI safeguards on autonomous weapons and surveillance or face blacklisting as a "supply-chain risk." In a February 24 meeting with Anthropic CEO Dario Amodei, Hegseth set a deadline of 5:01 p.m. ET that Friday for removing Claude's "hard limits" on military use, threatening to cancel the company's contract or invoke the Defense Production Act.

On the day of the deadline, Anthropic formally rejected the Pentagon's "final offer," noting that even the revised language would allow the safeguards to be disregarded at will. The Department of Defense responded by officially designating Anthropic a "supply-chain risk" last week. President Trump subsequently directed all federal agencies to stop using Anthropic technology, initiating a six-month phase-out period.

In public statements and an X post, Hegseth accused Anthropic of prioritizing ethical constraints over national security, insisting that no private company can dictate how the military uses AI and vowing to end "woke" restrictions. The Pentagon has also barred defense contractors from using Anthropic services on federal projects.

What are the legal arguments?

Anthropic alleges that the Trump administration's actions are "unprecedented and unlawful." In its 48-page complaint, the company argues that the government is wielding its power to punish a private entity for its "protected speech" and its policy positions on AI safety. Anthropic notes that the government never alleged that Claude was technically insecure, and it claims that the "supply-chain risk" label, typically reserved for foreign adversaries, is being used as illegal leverage in a contract dispute.

The lawsuits also allege a lack of due process, claiming that the "Secretarial Order" deprived the company of its property and liberty interests without a fair hearing. The company's lawyers argue that no federal statute authorizes the Pentagon to block a domestic U.S. company simply because it refuses to comply with ideological demands on AI usage. By taking the matter to court, Anthropic is asking judges to undo the designation and stop federal agencies from enforcing what it describes as an unlawful campaign of retaliation.

What is at stake for Anthropic?

The financial stakes of this blacklisting are massive: Anthropic's valuation was recently estimated at $380 billion. Court filings state that the government's actions threaten "hundreds of millions of dollars" in current contracts and future deals, including roughly $180 million in negotiations disrupted by the reputational damage of the "supply-chain risk" label.

This case could set a monumental precedent for the AI industry and its relationship with the state. While OpenAI struck a Pentagon deal allowing "lawful" AI use with technical safeguards, Anthropic's resistance marks a rare stand by a tech firm against military demands. The outcome may help determine whether private companies or the federal government have the final say on the ethical boundaries of AI deployment in warfare and national security.