Above: Sam Altman, CEO of OpenAI during the TechCrunch Disrupt 2017. Photo by TechCrunch (CC BY-2.0) on Wikimedia Commons

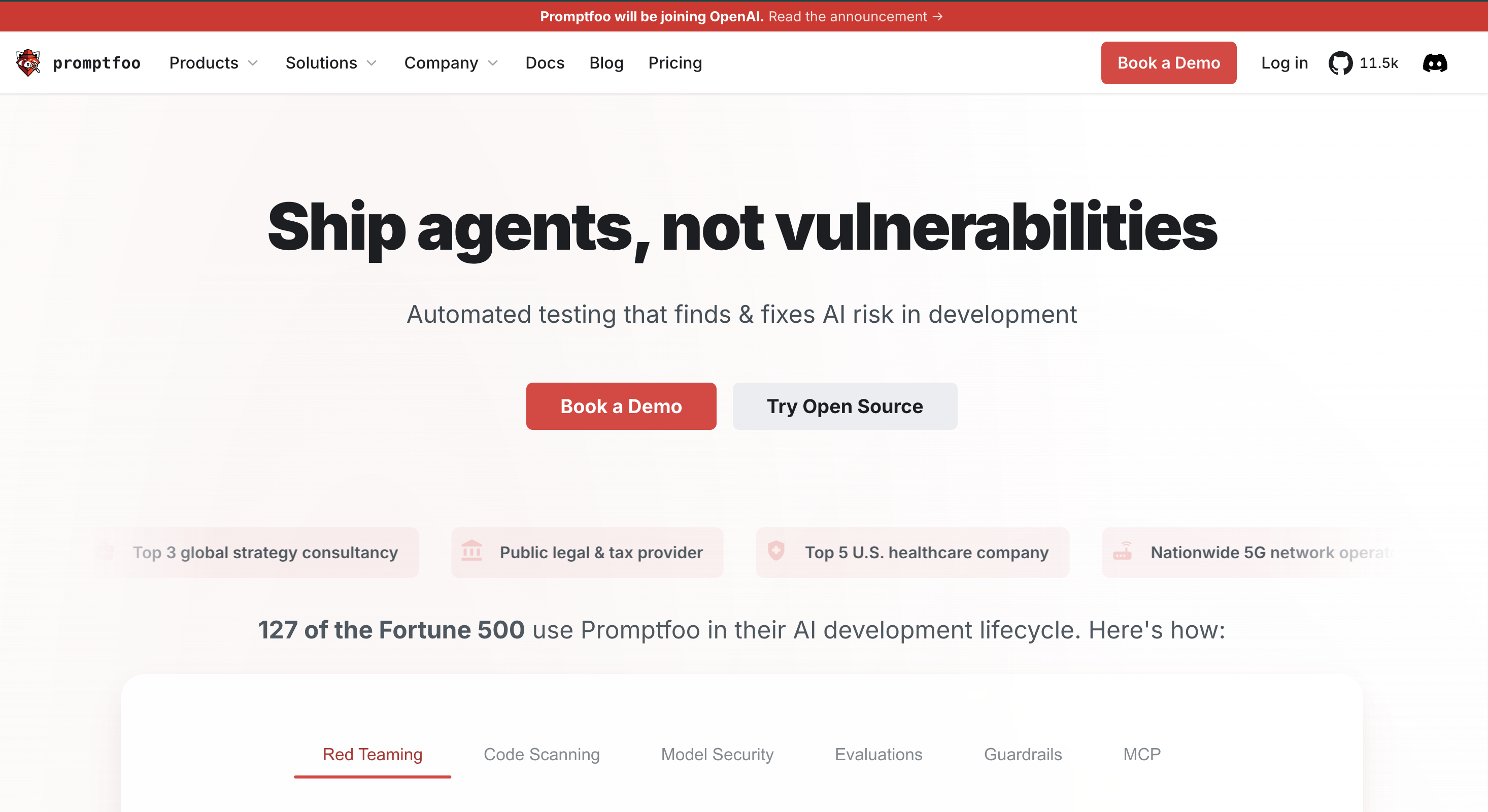

The artificial intelligence landscape is moving at a lightning-fast speed. Whether it is Humanity's Last Exam or NVIDIA's new AI inference chip, the AI world is evolving every second. The leading tech giant OpenAI recently made a revolutionary move by announcing its plans to acquire Promptfoo, a specialized AI security platform.

But why is OpenAI on an acquisition spree? The Bay Area giant is transitioning from a pure research lab into an end-to-end AI ecosystem leader, so it can control the entire AI stack (data, infrastructure, apps, and hardware) rather than solely focusing on model development.

Acquiring Promptfoo is not merely a business transaction. It shows a major turning point in how we ensure AI models are safe, reliable, and effective. Whether you are an experienced engineer or a young tech enthusiast, understanding this acquisition is vital for grasping the future of AI agents.

Also read || How AI is Redefining Social Media Engagement In 2026!

Promptfoo Explained: Key AI Testing Tool

Moving forward, let us understand what exactly Promptfoo is. And why are many developers talking about it? In simpler terms, it is an open-source testing tool designed to evaluate LLMs (Large Language Models). Simply, it is like a laboratory for AI developers, allowing them to test cases against models.

With Promptfoo, developers don't have to rely on guesswork and can systematically measure the quality, accuracy, and performance of their AI interaction through consistent, automated data benchmarks. The platform is crucial for tech as it brings scientific rigor to the art of prompt engineering.

Promptfoo allows engineers to catch errors easily, track performance changes over time, and compare different model outputs side-by-side. When companies are deploying AI, this level of scrutiny is mandatory for success. Hence, it changes how teams operate and perform testing, ensuring the final AI product behaves exactly as intended with minimum risks or errors.

Moreover, it simplifies the complex process of "red-teaming," which involves intentionally trying to break the AI to find vulnerabilities. It provides a framework to run thousands of test prompts rapidly, checking for issues like hallucinations, data leaks, or policy violations. Thus, it saves time for developers.

Also read || State of the Union 2026 Ignites AI Boom

OpenAI Bolsters AI Agent Security

After understanding this tool, let us decode how it impacts the AI ecosystem. OpenAI will integrate this technology into its Frontier platform, which it announced last year. Thus, creating a secure environment for building AI coworkers. With this integration, businesses can deploy AI agents without worrying about security gaps, allowing them to scale operations rapidly and reliably.

Essentially, OpenAI is moving from reactive patching to proactive defense. Instead of waiting for a "hacker" to find a flaw, they use automated stress-testing tools (like Promptfoo) to simulate attacks. This lets developers catch prompt injections—where someone tries to "trick" the AI into breaking its rules—before the software is ever launched.

For businesses, this shifts the focus to governance and auditability. It's no longer a "black box"; new reporting features provide a paper trail of how the AI reaches its conclusions. By making the AI's logic traceable, companies can prove they are meeting legal standards and keep a "human in the loop" to maintain total control over the system.

Also read || What Skills Do 2026 Graduates Need For An AI-Driven Job Market

AI Safety Careers on the Rise

Every tech worker is concerned about whether they'll be relevant in the future or not. This acquisition shines a spotlight on an emerging and critical career field, which is AI Safety and Evaluation Engineering. The modern-day students or aspiring engineers not only need to build a functional app, but must demonstrate that their software is secure, ethical, and rigorously tested.

The trends indicate that tech giants are investing heavily in the infrastructure of trust. Hence, knowing how to test and secure AI models is becoming a fundamental skill for developers. The best part of the future is that these powerful tools will be developed in the open, ensuring that every hobbyist, developer, or high-school student has access to the same tech used by Fortune 500 companies.

Eventually, this acquisition signals that the era is changing. You not only have to move fast and break things, but also move fast and test everything. So, you can get quicker results. Keeping an eye on these evaluation tools and mastering prompt testing and system verification will give you an edge in your future tech careers. And the future belongs to those who prepare for it today.