Instagram has introduced a new safety feature that will alert parents or guardians when their teen repeatedly searches for content related to suicide, self-harm, or other distressing topics on the platform. The update was announced by Meta on February 26, 2026, and is part of the company’s ongoing effort to improve online safety for young people.

What the New Feature Does

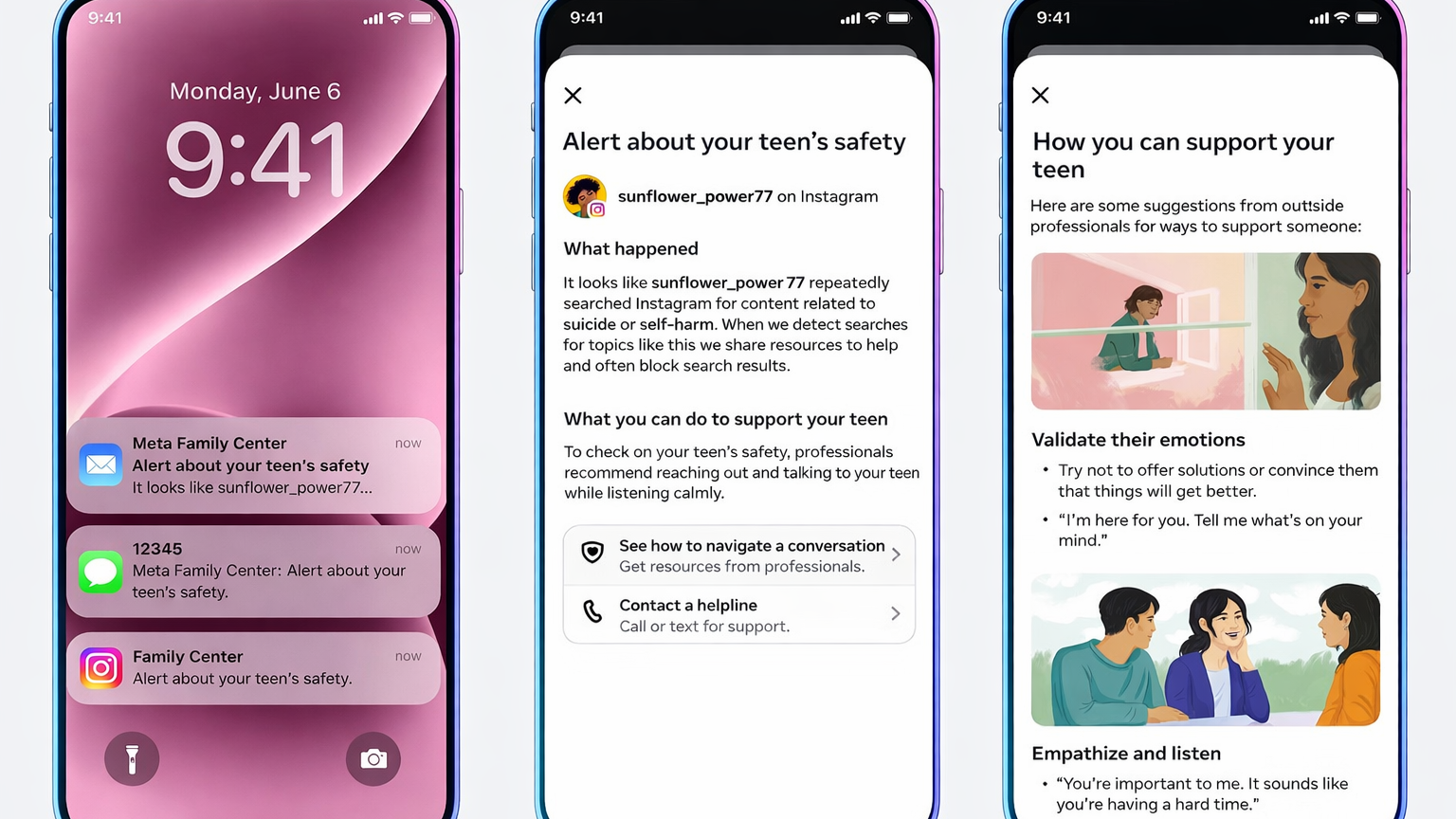

The new alerts are designed to help parents understand when a teenager might need emotional support. If a teen searches for terms related to suicide or self-harm multiple times within a short period, Instagram will send a notification to their parent or guardian, letting them know that the repeated searches may be a sign their child is struggling.

Meta said the alerts are meant to encourage constructive conversations between parents and teens, not to punish or shame teens for looking up sensitive topics. The company emphasized that most teens do not search for this kind of content, and when they do, the platform already shows support resources instead of harmful material.

How the Alert System Works

The alert feature is part of Instagram’s parental supervision tools. For parents to receive alerts, both the parent and the teen must opt in by enabling supervision settings on the teen’s account. Supervision is available for accounts belonging to users aged 13 to 17, if families choose to use the tools.

When repeated searches for harm-related terms are detected:

- Instagram sends a notification to the parent.
- Alerts may be delivered through email, text message, in-app notifications, or messaging apps.
- Parents receive links to expert-backed resources to help guide discussions with their teen.

Meta worked with outside safety and mental health experts to determine what level of search activity should trigger an alert, aiming to reduce unnecessary notifications while still flagging potential concerns.

Existing Safety Measures Instagram Uses

Before the introduction of the alerts, Instagram already took steps to protect teens from harmful content. When a teen searches for distressing topics, Instagram blocks many harmful results and shows links to support resources and crisis help lines instead. These resources appear directly in the app to connect teens with help quickly.

The new alert system does not replace these protections. It adds another layer of insight for families, so that parents know when a teen continues to search after seeing the support resources.

Rollout and Availability

Meta said the alerts will begin rolling out in the United States, the United Kingdom, Australia, and Canada in early March 2026. The feature will become available in more regions later in the year. Instagram plans to expand the capabilities of the supervision tools over time.

Why This Matters

Teen online safety and mental health have become major topics of concern for families, policymakers, and regulators around the world. Social media platforms like Instagram have faced scrutiny over how algorithms and content exposure might affect young people’s well-being. By introducing parental alerts, Meta aims to give families another tool to notice warning signs and support teens before issues escalate.

Meta also said it is exploring ways to extend the alerts to cases where teens discuss sensitive topics with AI tools on its platforms.