Love it or detest it, artificial intelligence is always in the headlines. Whether the topic is layoffs, automation, academia, or warfare, AI keeps expanding into areas few of us imagined. A global team of researchers has developed a new benchmark called "Humanity's Last Exam" (HLE). It includes 2,500 expert-level questions across the humanities, mathematics, natural sciences, and other specialized fields, designed to test capabilities that existing systems struggle to handle.

Before Humanity's Last Exam, benchmarks like the Massive Multitask Language Understanding (MMLU) exam were a standard way to test model capabilities. However, the strongest AI systems now score so well on them that these tests reveal little about the current limitations of artificial intelligence. Humanity's Last Exam is the new, harder alternative: its questions were screened, and any item that leading models answered correctly was removed from the final set.
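
The screening step described above can be illustrated with a minimal sketch. It assumes each "model" is simply a function that maps a prompt to an answer, and that correctness is judged by a normalized exact match. The names `screen_questions`, `model_a`, and `model_b` are hypothetical stand-ins, not part of the actual HLE pipeline, which likely involves additional expert review.

```python
from typing import Callable, Dict, List

Question = Dict[str, str]      # {"prompt": ..., "answer": ...}
Model = Callable[[str], str]   # hypothetical interface: prompt in, answer out


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial differences do not matter."""
    return " ".join(text.lower().split())


def screen_questions(questions: List[Question], models: List[Model]) -> List[Question]:
    """Keep only the questions that none of the reference models answers correctly."""
    kept = []
    for q in questions:
        solved = any(normalize(m(q["prompt"])) == normalize(q["answer"]) for m in models)
        if not solved:
            kept.append(q)
    return kept


# Toy stand-ins for "leading models" (assumptions for illustration only).
def model_a(prompt: str) -> str:
    return "Paris" if "France" in prompt else "unknown"


def model_b(prompt: str) -> str:
    return "4" if "2 + 2" in prompt else "unknown"


candidates = [
    {"prompt": "What is the capital of France?", "answer": "Paris"},
    {"prompt": "What is 2 + 2?", "answer": "4"},
    {"prompt": "A question only a domain expert could answer", "answer": "expert answer"},
]

hard_set = screen_questions(candidates, [model_a, model_b])
print([q["prompt"] for q in hard_set])
```

Only the third question survives, because at least one toy model solves each of the first two.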

Why Standard AI Tests Are Failing

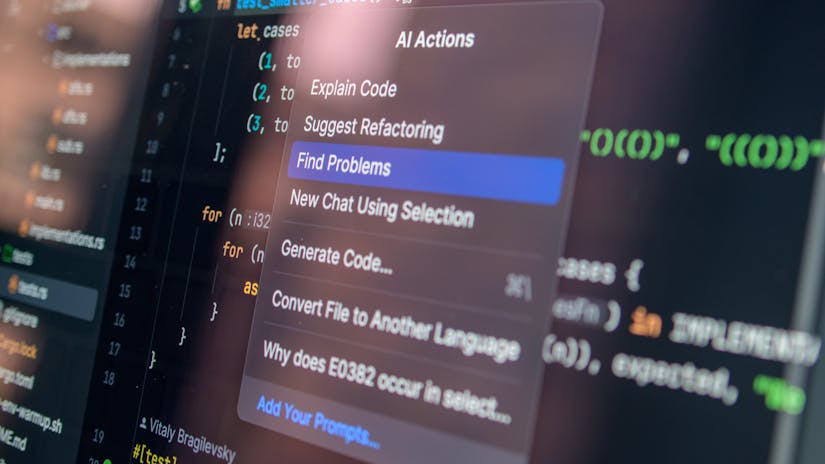

Most current AI benchmarks focus on academic knowledge or complex reasoning, so they do not reflect real-world utility. For example, an AI might pass a legal bar exam or solve a professional-level coding problem without being able to navigate a simple website or book a flight. Why does this happen? One reason is that traditional tests let machines "cheat" by memorizing answers that appear in their training data instead of reasoning through the problem. That is why researchers realized they needed a fresh approach that emphasizes real-world logic over rote memorization.

Humanity's Last Exam: Pushing Academic Boundaries

Humanity's Last Exam (HLE) offers expert-level depth, with rigorously screened questions spanning the sciences, mathematics, humanities, and other fields. Unlike MMLU, HLE drops the items that leading models already solve, which exposes real reasoning gaps and shows how far most AI models still fall short of human experts. The leaderboard below shows the standings as of late 2025.

| Model | Accuracy ± CI | Calibration Error |
| --- | --- | --- |
| Gemini 3 Pro | 37.52% ± 1.90% | 57% |
| Claude Opus 4.6 Thinking | 34.44% ± 1.86% | 46% |
| GPT-5 Pro | 31.64% ± 1.82% | 49% |
| GPT-5.2 | 27.80% ± 1.76% | 45% |
| Others (e.g., Grok 4) | ~25% | ~50-70% |
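
To make the "± CI" column easier to read, here is a small sketch of how such a margin can be computed. It assumes the margins are 95% normal-approximation (Wald) intervals over the 2,500 questions. That is an assumption about how the leaderboard was built, although the figures in the table are consistent with it. The function name `accuracy_margin` is illustrative.

```python
import math


def accuracy_margin(accuracy: float, n_questions: int, z: float = 1.96) -> float:
    """Half-width of a normal-approximation confidence interval for an accuracy.

    accuracy is a fraction in [0, 1]; z = 1.96 corresponds to a 95% interval.
    """
    return z * math.sqrt(accuracy * (1.0 - accuracy) / n_questions)


N_QUESTIONS = 2500  # size of the question set, as stated in the article

scores = [
    ("Gemini 3 Pro", 0.3752),
    ("Claude Opus 4.6 Thinking", 0.3444),
    ("GPT-5 Pro", 0.3164),
    ("GPT-5.2", 0.2780),
]

for name, acc in scores:
    margin = accuracy_margin(acc, N_QUESTIONS)
    print(f"{name}: {acc:.2%} ± {margin:.2%}")
```

Under this assumption the computed margins match the table to two decimal places. In practical terms, two models whose intervals overlap cannot be confidently ranked against each other from this benchmark alone.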

These sub-40% scores highlight why even frontier AIs falter on novel, non-memorized problems, and they complement real-world tests like GAIA. So what is the GAIA benchmark? GAIA stands for General Artificial Intelligence Assistants. It is a specialized open-source evaluation framework developed to measure how well AI agents solve real-world, multi-step problems. For a human, GAIA tasks are conceptually simple, like finding a specific video or managing a multi-step scheduling request. An AI, however, needs several tools and reasoning steps to complete these easy tasks. Once you move away from purely mathematical or linguistic challenges, you can see how the "brains" of these digital assistants struggle with everyday human logic.

How the GAIA Benchmark Works

The GAIA benchmark is unique because its questions are conceptually easy for humans but technically demanding for machines. A person can browse the internet for a specific piece of information and interpret it with little effort; a machine needs tools and multiple reasoning steps to do the same. Every GAIA question is designed to be unambiguous, with only one correct answer, and it usually requires the AI to interact with the real world or browse the live web. For instance, you might ask the AI to find the date of a specific local event and then calculate how many days are left until it occurs. That requires the AI to search, verify, and calculate in one coordinated workflow.
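
The search, verify, and calculate workflow from the event example can be sketched as a tiny pipeline. This is an illustration under stated assumptions, not how any GAIA agent is actually implemented: `search_event` is a hypothetical stub that returns canned results instead of calling a real search tool, and the sources and dates are made up.

```python
from collections import Counter
from datetime import date
from typing import Dict, List


def search_event(query: str) -> List[Dict[str, str]]:
    """Hypothetical stand-in for a web search tool; returns canned snippets."""
    return [
        {"source": "city-calendar.example", "date": "2026-07-04"},
        {"source": "news-blog.example", "date": "2026-07-04"},
        {"source": "old-forum.example", "date": "2025-07-04"},  # stale result
    ]


def verify_date(results: List[Dict[str, str]]) -> date:
    """Accept a date only if a strict majority of sources agree on it."""
    counts = Counter(r["date"] for r in results)
    value, votes = counts.most_common(1)[0]
    if votes * 2 <= len(results):
        raise ValueError("sources disagree; cannot verify the event date")
    return date.fromisoformat(value)


def days_until(event_day: date, today: date) -> int:
    """Number of days from today until the event."""
    return (event_day - today).days


def answer(query: str, today: date) -> int:
    """Run the three steps in order: search, verify, calculate."""
    results = search_event(query)
    event_day = verify_date(results)
    return days_until(event_day, today)


print(answer("date of the local summer festival", today=date(2026, 6, 1)))
```

Each step depends on the one before it: a stale search result that slipped past verification would produce a confident but wrong day count.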

To keep the test difficult, the researchers made sure that the answers cannot be found simply by "guessing" from the text. The AI must also demonstrate multimodality, meaning it must handle text, images, and sometimes audio files within the same task. Because these tasks involve many small, interconnected steps, a single small error at the beginning can lead to a completely wrong answer. This "all or nothing" scoring system gives a very clear picture of whether an AI is truly reliable or just lucky.
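
A short sketch shows why "all or nothing" scoring is so unforgiving for multi-step tasks. It assumes answers are compared by normalized exact match and that each step succeeds independently with the same probability. Both are simplifying assumptions for illustration, and the function names are hypothetical.

```python
def exact_match(prediction: str, reference: str) -> int:
    """Return 1 only if the normalized final answers are identical; no partial credit."""
    def norm(s: str) -> str:
        return " ".join(s.strip().lower().split())

    return int(norm(prediction) == norm(reference))


def chain_success(per_step_accuracy: float, n_steps: int) -> float:
    """Probability that every step succeeds, assuming independent steps."""
    return per_step_accuracy ** n_steps


print(exact_match(" 33 ", "33"))      # formatting differences are tolerated
print(exact_match("33 days", "33"))   # any other deviation scores zero

for steps in (1, 5, 10, 20):
    print(steps, round(chain_success(0.95, steps), 3))
```

Under these assumptions, an agent that gets each individual step right 95% of the time completes a ten-step task only about 60% of the time, which is why small per-step errors show up so clearly in the final score.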

The Future of Human and AI Synergy

The results from initial GAIA testing show a large gap between human and AI performance. Humans typically score very high on GAIA tasks, around 92%, thanks to our common sense. The most powerful AI models, on the other hand, currently score around 60-65%. This points to a significant gap in basic conceptual understanding between humans and AI. AI can write code or poetry, but when it comes to navigating messy, unorganized real-time data, humans still have the edge.

Moving forward, the goal of benchmarks like GAIA is not to "beat" the AI but to help it become a safer and more efficient partner to humans. When engineers identify these specific weaknesses, they can improve reasoning capabilities instead of just making the models larger. Understanding these limits also shows why human oversight still matters for the everyday tasks that are simple for people but hard for machines.